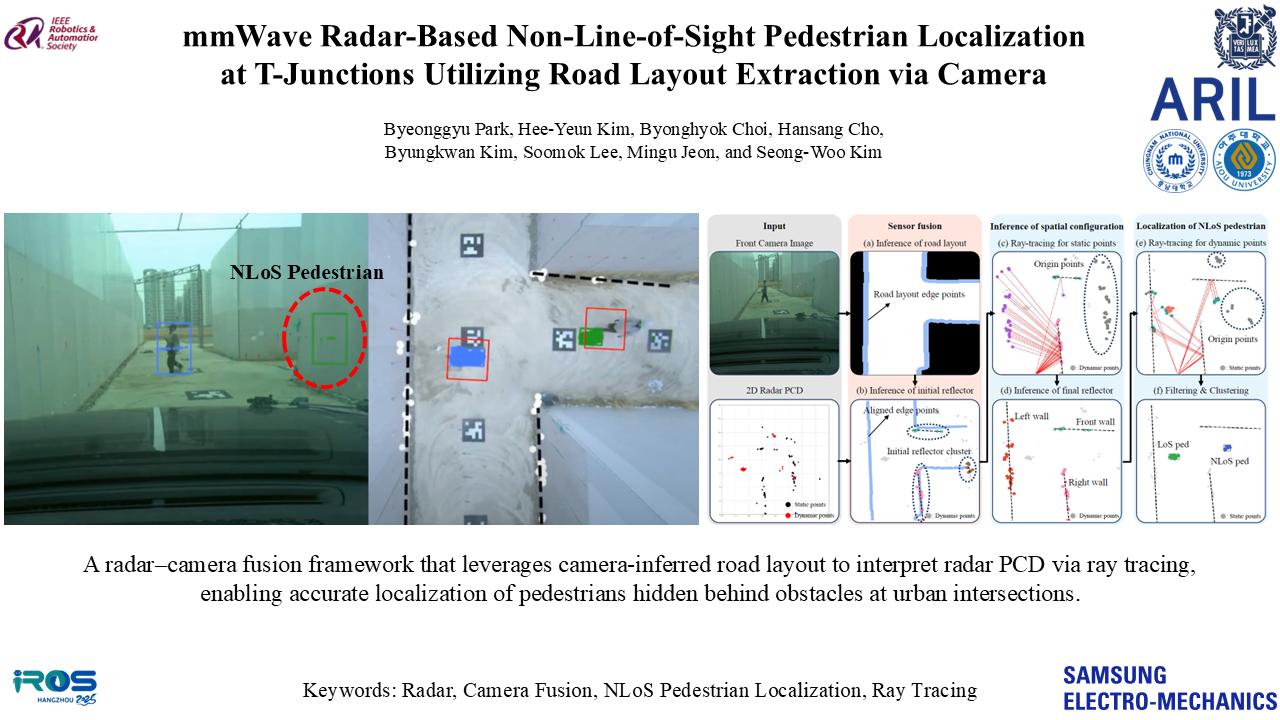

Introduction

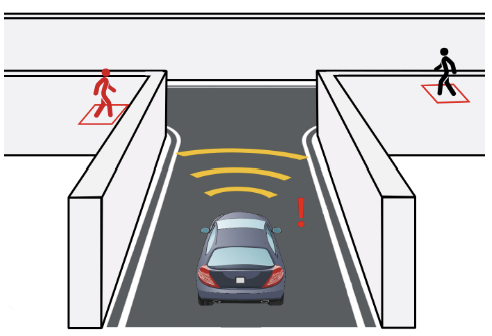

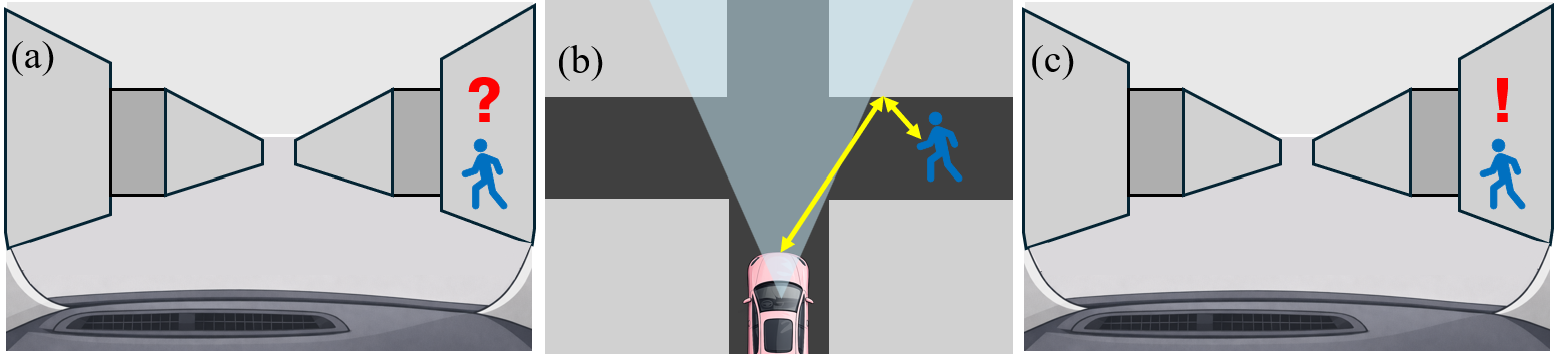

Illustration of NLOS pedestrian detection leveraging reflective wave propagation in urban alleys.

Perceiving pedestrians in non-line-of-sight (NLOS) regions is a critical yet formidable challenge for autonomous driving. In narrow urban environments, such as alleys or dense intersections, significant regions often fall outside the vehicle's direct line of sight (LOS). Although direct detection is impossible in these occluded areas, they can be observed indirectly by leveraging reflective wave propagation. By analyzing how signals bounce off surrounding structures, it is possible to obtain spatial evidence of hidden targets and infer their positions despite the absence of direct visibility.

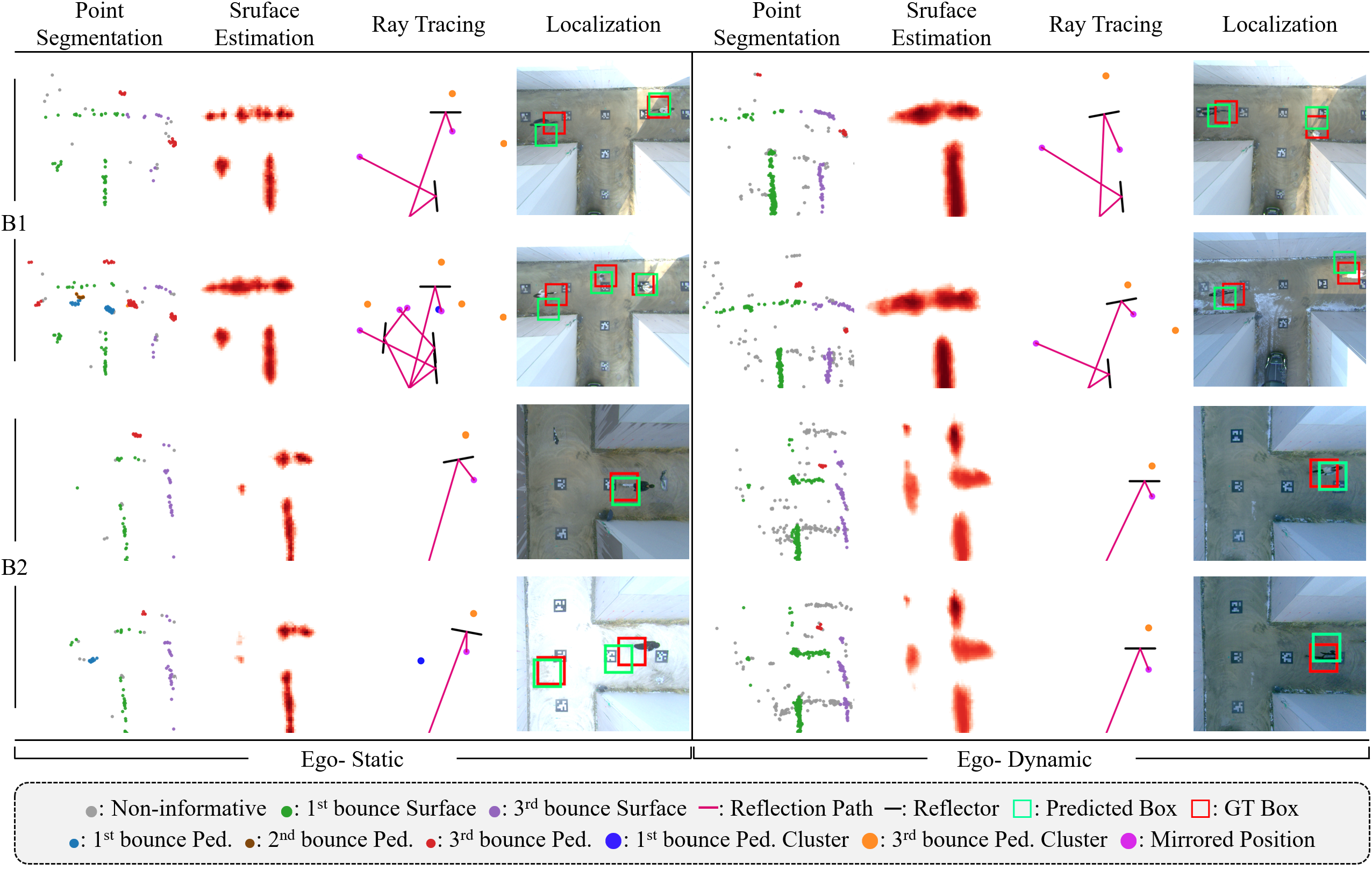

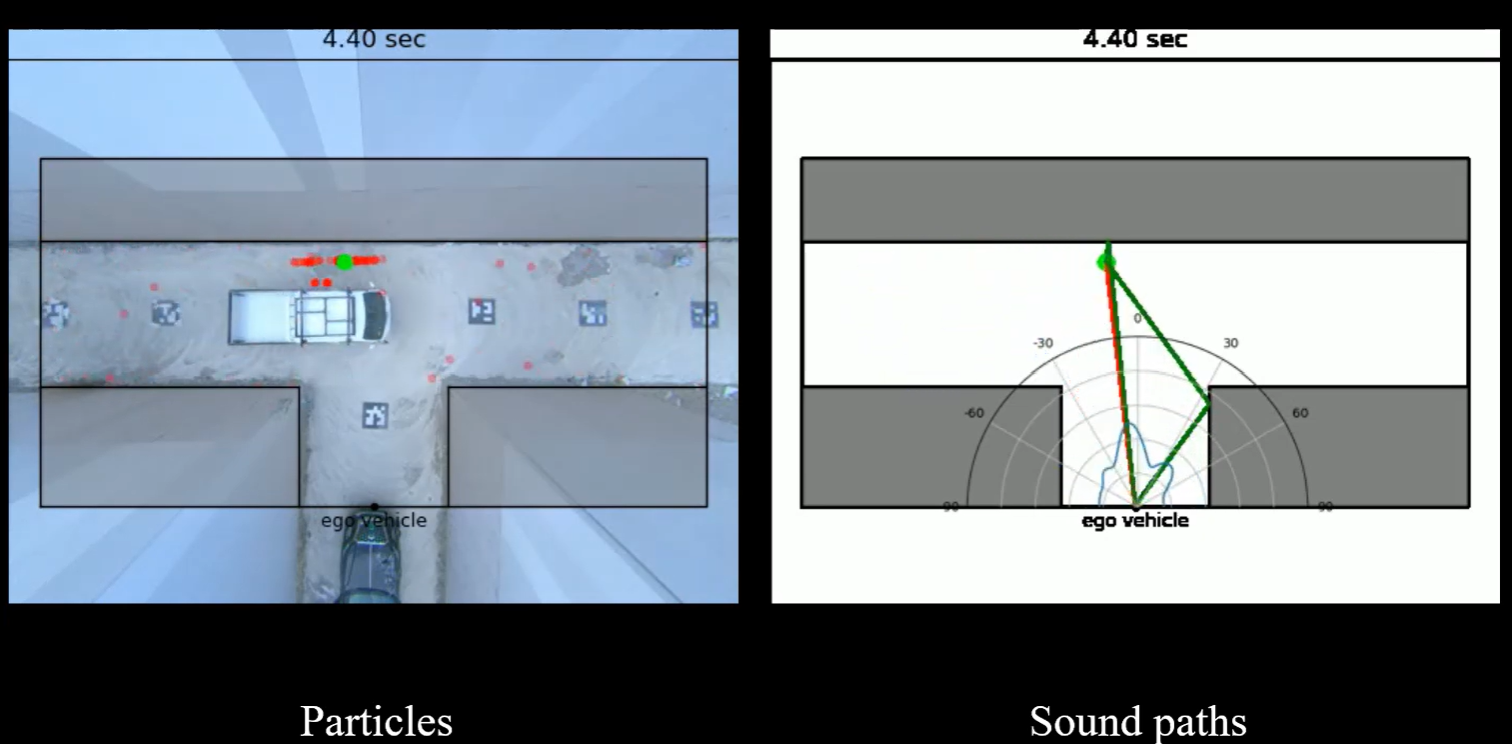

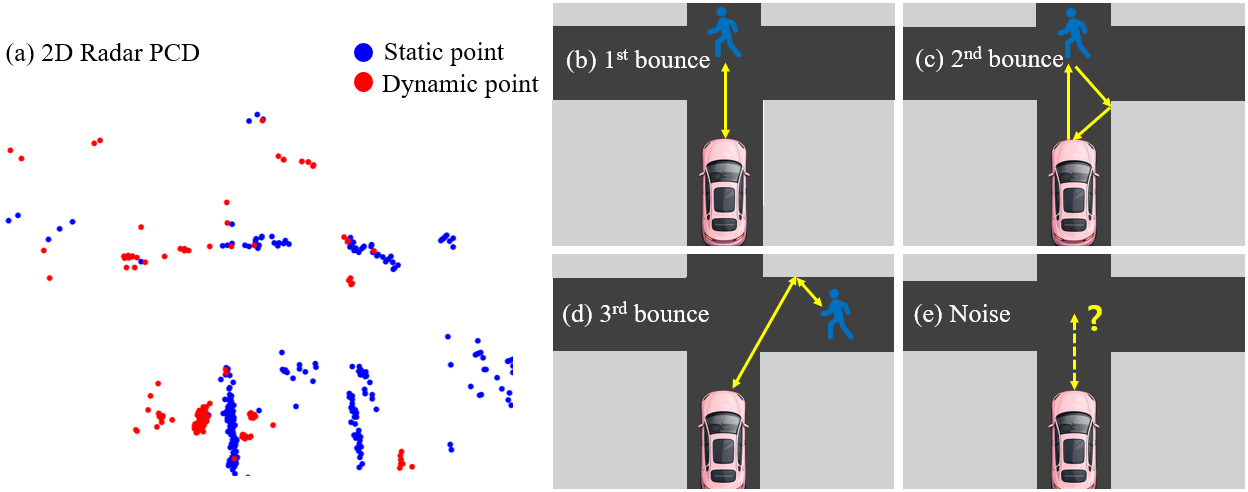

Technical challenges of radar PCD in ego-dynamic environments with multi-bounce reflections.

However, achieving reliable NLOS perception using mmWave radar Point Cloud Data (PCD) presents several technical hurdles. First, radar observations in ego-dynamic conditions are inherently sparse and frequently corrupted by environmental clutter and measurement noise. Second, because radar signals propagate along multi-bounce reflection paths (1st, 2nd, and 3rd order), detected objects appear displaced from their actual locations. The system must therefore accurately estimate reflective surfaces and reconstruct physically valid propagation trajectories to overcome structurally inconsistent noise and contextual ambiguity.
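To make the displacement concrete, the following is a minimal sketch of the simplest case only: a single (1st-order) specular bounce off a flat wall whose position is already known. Under that assumption, the radar sees a "ghost" at the mirror image of the true target, so reflecting the ghost back across the wall recovers the target position. The function name `mirror_across_wall`, the 2D setup, and the example coordinates are illustrative assumptions, not the method described here, which must also estimate the reflective surfaces and handle higher-order paths.

```python
import numpy as np


def mirror_across_wall(point, wall_point, wall_normal):
    """Reflect a 2D point across a flat wall (hypothetical helper).

    The wall is the line through `wall_point` with normal `wall_normal`.
    Assumes an ideal specular reflector and a known wall geometry.
    """
    n = np.asarray(wall_normal, dtype=float)
    n = n / np.linalg.norm(n)  # make sure the normal has unit length
    p = np.asarray(point, dtype=float)
    # Signed distance from the point to the wall along the normal.
    d = np.dot(p - np.asarray(wall_point, dtype=float), n)
    # Move the point twice that distance back through the wall.
    return p - 2.0 * d * n


# Illustrative scene: radar at the origin, wall along the line x = 2.
radar = np.array([0.0, 0.0])
ghost = np.array([5.0, 3.0])  # apparent detection "behind" the wall
true_pos = mirror_across_wall(ghost, wall_point=[2.0, 0.0], wall_normal=[1.0, 0.0])

# The straight-line range to the ghost equals the bounced path length
# radar -> wall -> target, which is why the radar reports the ghost range.
t = 2.0 / ghost[0]                 # where the radar-ghost ray hits x = 2
bounce = radar + t * (ghost - radar)
path_len = np.linalg.norm(bounce - radar) + np.linalg.norm(true_pos - bounce)
ghost_range = np.linalg.norm(ghost - radar)
```

In this example the ghost at (5, 3) maps back to a target at (-1, 3), and `path_len` matches `ghost_range`. A 2nd- or 3rd-order path would require applying such reflections in sequence across each surface involved, which is only possible once those surfaces have been estimated.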

Key Contributions

- Practical NLOS Framework: We propose a robust learning framework, designed specifically for moving ego-vehicles, that enables precise NLOS pedestrian localization.

- Reflection-aware Reasoning: By integrating multimodal feature fusion with physics-guided ray tracing, our method effectively recovers distorted radar propagation trajectories.

- Real-world Validation: We demonstrate state-of-the-art performance in complex outdoor intersections, achieving a localization accuracy of 1.23 m under dynamic driving conditions.